M.2 NVMe vs Standard NVMe for Hong Kong Servers

In modern hosting environments, choosing between M.2 NVMe and standard NVMe is less about marketing language and more about topology, airflow, maintenance windows, and failure domains. For Hong Kong server deployments, where latency-sensitive services, dense racks, and always-on workloads are common, storage form factor becomes a design decision rather than a shopping checkbox. Although both options speak the NVMe protocol over PCIe, they do not behave the same way inside a production server, especially when you care about thermals, hot-swap serviceability, and long-run operational consistency.

Why this comparison matters in a Hong Kong server context

Hong Kong infrastructure is often selected for regional reach, low-latency connectivity, and business-critical service delivery. That means storage is expected to do more than post fast benchmark bursts. It needs to remain predictable under queue pressure, survive sustained activity, and fit into a server lifecycle that includes replacement, scaling, and remote hands. In that environment, the M.2 NVMe versus standard NVMe question is really about architecture.

- Do you need easier field replacement?

- Do you expect sustained write-heavy or mixed workloads?

- Will the server be expanded later?

- Is the platform optimized for front-access storage service?

- Will the system live in hosting or colocation racks with limited intervention time?

If the answer to several of those is yes, then the physical implementation of NVMe matters as much as the protocol itself.

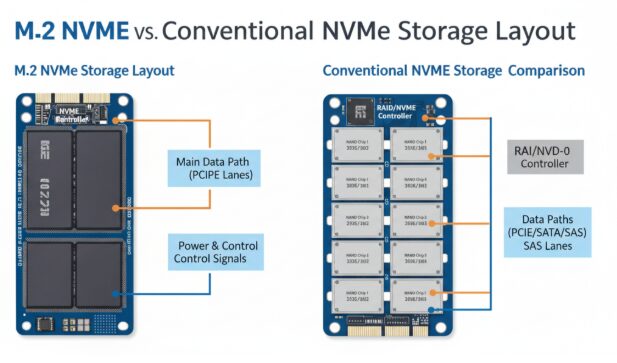

First principle: NVMe is a protocol, M.2 is a form factor

A common mistake is to compare M.2 NVMe with NVMe as if they were mutually exclusive categories. They are not. NVMe defines the command and transport model for non-volatile storage over PCIe, while M.2 defines a physical form factor. "Standard NVMe" in server discussions usually refers to server-oriented form factors such as front-access drives, add-in cards, or other enterprise-friendly implementations designed around serviceability and thermal control. Industry documentation consistently treats NVMe as protocol-level technology that spans multiple physical shapes, including M.2 and server-centric alternatives.

That distinction is the foundation of good storage planning. Once you separate protocol from package, the discussion becomes clearer:

- Protocol determines how efficiently the host communicates with flash storage.

- Form factor influences power envelope, cooling path, replacement method, and density planning.

- Server suitability depends on the combination of both.
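The separation between protocol and package can be made concrete with a small data model. The following is only a sketch: the class names, the drive names, and the `FRONT_SERVICEABLE` table are illustrative assumptions, and the serviceability values describe typical chassis designs rather than a guarantee for any specific platform.

```python
from dataclasses import dataclass
from enum import Enum


class FormFactor(Enum):
    M2 = "M.2"
    U2 = "U.2 (2.5-inch bay)"
    ADD_IN_CARD = "PCIe add-in card"


@dataclass(frozen=True)
class Drive:
    name: str                # hypothetical inventory label
    protocol: str            # how the host talks to the device
    form_factor: FormFactor  # how the device is physically packaged


# Assumption: typical traits only; real chassis designs vary.
FRONT_SERVICEABLE = {
    FormFactor.M2: False,
    FormFactor.U2: True,
    FormFactor.ADD_IN_CARD: False,
}

drives = [
    Drive("boot0", "NVMe", FormFactor.M2),
    Drive("data0", "NVMe", FormFactor.U2),
]

# Both drives share one protocol, yet differ in how they are serviced.
same_protocol = len({d.protocol for d in drives}) == 1
front_access = [d.name for d in drives if FRONT_SERVICEABLE[d.form_factor]]
```

Keeping `protocol` and `form_factor` as independent fields mirrors the point above: the first says nothing about the second.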

What M.2 NVMe does well

M.2 NVMe is attractive because it is compact, direct, and often easy to integrate into systems where board space is available. In lower-complexity deployments, it can be a clean way to add fast local storage without introducing additional drive cages or cabling paths. For lightweight hosting nodes, utility systems, edge services, or boot-oriented roles, that simplicity can be useful.

- Small physical footprint

- Cleaner internal layout in compact systems

- Reduced mechanical complexity

- Suitable for lighter-duty or specialized roles

- Practical for deployments where manual replacement is infrequent

For technical teams, the geeky appeal of M.2 is obvious: fewer layers, direct motherboard integration, and a compact path to NVMe performance. In lab builds, dev clusters, and low-density utility nodes, that can be entirely reasonable.

Where M.2 NVMe becomes less ideal in servers

The same traits that make M.2 elegant in compact systems can become liabilities in production servers. The module is physically small, typically mounted on the board or an internal carrier, and often lives in a thermal neighborhood crowded by CPUs, memory, and network interfaces. Under sustained activity, heat handling becomes harder, and service operations can become slower because the drive is not always designed for quick front-access replacement.

From an operational viewpoint, these are the pressure points:

- Thermal headroom is usually tighter.

- Hot-swap is typically not available, since M.2 sockets are not designed for hot-plug.

- Access may require opening the chassis.

- Expansion options can be limited by motherboard layout.

- The drive position may not align with ideal airflow paths in dense racks.

For a single developer box, this may be fine. For a production server that supports customer traffic, scheduled maintenance, or remote recovery, it is a different story.

What “standard NVMe” usually means in server design

When engineers say "standard NVMe" in a server conversation, they usually mean a more serviceable server-oriented implementation: front-access drive bays (for example U.2, U.3, or EDSFF drives), enterprise add-in cards, or other chassis-friendly forms intended for data center operation. These designs are built around practical concerns such as hot-plug behavior, enclosure management, manageable thermals, and easier replacement.

That matters because data center storage is not evaluated in isolation. It sits inside a whole operational stack:

- Server chassis airflow

- Backplane and lane routing

- Monitoring and enclosure management

- Swap procedures and downtime policy

- Rack density and service workflow

Server-friendly NVMe form factors generally fit that stack more naturally than board-mounted modules.

Thermals: the hidden variable many buyers underestimate

Thermals are where many storage decisions stop being theoretical. In short tests, both M.2 NVMe and standard NVMe can look fast enough. In a live server, however, storage performance is shaped by temperature stability, power delivery, and how the device interacts with the chassis airflow model. Technical references from storage standards groups note that server-oriented SSD forms are designed with stronger attention to serviceability, power, and thermal management.

For Hong Kong hosting deployments, this issue becomes more practical than academic. Dense environments, continuous traffic, and mixed read-write patterns can expose thermal weaknesses quickly. A storage device that performs well in a desktop-like thermal envelope may behave differently in a compact multi-device server.

- M.2 often relies on localized cooling conditions.

- Server-oriented NVMe is usually easier to align with front-to-back airflow.

- Thermal predictability supports steadier latency behavior.

- Better heat handling reduces the chance of performance throttling during sustained work.

If your workload is bursty and light, thermal limits may never become visible. If your workload is persistent, queue-rich, or write-active, they often will.
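One way to make this measurable is to log drive temperature during a sustained test and check how much of the run was spent near the throttle point. The sketch below assumes the temperature samples were already collected (for example from the drive's health log) and that the threshold comes from the drive's datasheet. The function name, the sample values, and the 70 °C threshold are illustrative assumptions, not measurements.

```python
from typing import Sequence


def thermal_exposure(temps_c: Sequence[float], threshold_c: float) -> dict:
    """Summarize how much of a run was spent at or above a threshold."""
    if not temps_c:
        raise ValueError("need at least one temperature sample")
    at_or_over = [t for t in temps_c if t >= threshold_c]
    return {
        "peak_c": max(temps_c),
        "fraction_at_or_over": len(at_or_over) / len(temps_c),
    }


# Illustrative samples only, evenly spaced in time (an assumption).
samples = [48.0, 55.0, 63.0, 70.0, 72.0, 71.0, 66.0, 58.0]
summary = thermal_exposure(samples, threshold_c=70.0)
```

A bursty workload would tend to show a low fraction here, while a persistent write-heavy one may spend a large share of the run at or above the threshold.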

Serviceability and uptime: where server form factors usually win

Uptime is not just a software property. It is also a hardware accessibility property. If a failed drive requires opening the chassis, changing airflow covers, or touching adjacent components, the replacement event becomes more intrusive. Standard NVMe server implementations are generally favored in environments where field service, controlled replacement, and operational continuity matter.

For this reason, teams running customer-facing services often prioritize:

- Front-access replacement paths

- Cleaner maintenance procedures

- Reduced hands-on time per incident

- Lower disruption risk during drive swaps

- Better compatibility with monitored storage operations

This is especially relevant in remote hosting and colocation scenarios, where every intervention may involve scheduling, ticketing, and coordination with on-site staff.

Performance is not only about speed, but about behavior

Engineers rarely care about a storage device only because it is fast. They care because it behaves well under real load. That means stable latency, consistent response under mixed IO, and fewer surprises when the server is busy. Both M.2 NVMe and standard NVMe use the same protocol family, so the real-world difference often comes from implementation quality, thermal constraints, controller power budget, and server integration.

When evaluating storage for production hosting, ask questions like these instead of chasing headline numbers:

- How does the drive behave during sustained IO rather than short bursts?

- How easy is it to cool in a populated chassis?

- Can it be replaced with minimal disruption?

- Does the platform support healthy monitoring and management workflows?

- Will this layout still make sense when the server is upgraded or repurposed?

That mindset leads to better infrastructure decisions than raw benchmark thinking.
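As a rough illustration of why averages hide behavior, the sketch below compares the median with the 99th percentile of a latency sample. It uses a simple nearest-rank percentile. The function names and the latency values are assumptions chosen for illustration, not results from any real drive.

```python
from statistics import fmean
from typing import Sequence


def nearest_rank(sorted_vals: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct in 1..100."""
    n = len(sorted_vals)
    rank = max(1, (pct * n + 99) // 100)  # integer ceiling of pct * n / 100
    return sorted_vals[rank - 1]


def latency_profile(latencies_us: Sequence[float]) -> dict:
    """Return mean, p50, p99, and the p99/p50 tail ratio."""
    ordered = sorted(latencies_us)
    p50 = nearest_rank(ordered, 50)
    p99 = nearest_rank(ordered, 99)
    return {
        "mean": fmean(ordered),
        "p50": p50,
        "p99": p99,
        "tail_ratio": p99 / p50,
    }


# Illustrative data: mostly fast requests with a slow tail.
latencies = [100.0] * 98 + [900.0, 1000.0]
profile = latency_profile(latencies)
```

In this made-up sample the mean stays close to the median, while the tail ratio exposes the slow requests that users of a busy server would actually notice.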

Which workloads fit M.2 NVMe better

M.2 NVMe can still be the right answer in several server cases. The key is to match it to roles where compactness and simplicity are useful, but serviceability limits are acceptable. Not every server is a heavily loaded database node. Some systems are light, specialized, or operationally isolated enough that M.2 makes perfect sense.

- Boot or auxiliary storage roles

- Lightweight web hosting nodes

- Development and testing systems

- Edge or appliance-style deployments

- Utility services with modest write pressure

In these cases, M.2 NVMe can deliver a neat and efficient build, provided the platform offers reasonable cooling and you accept the service model.

Which workloads fit standard NVMe better

Standard NVMe is usually the safer default for production-heavy workloads, particularly when the server is expected to stay online, scale cleanly, and remain easy to maintain. If your stack includes databases, virtualization, caching layers, analytics pipelines, or high-concurrency services, server-oriented NVMe layouts tend to align better with the job.

- Primary storage for business applications

- Write-active transactional systems

- Virtualization hosts

- High-concurrency content platforms

- Services where swap speed and operational continuity matter

In short, if storage is mission-critical rather than merely present, standard NVMe generally offers the more resilient path.

A practical decision framework for hosting teams

For infrastructure engineers, the easiest way to choose is to think in layers. Do not start with form factor preference. Start with operational constraints, then map them to storage design.

- Define the workload profile: boot, cache, database, VM store, or mixed service.

- Check service expectations: planned downtime tolerance, replacement policy, remote intervention limits.

- Review chassis behavior: airflow path, slot layout, and physical access pattern.

- Consider lifecycle: future expansion, migration, and parts replacement workflow.

- Choose the NVMe form factor that introduces the least long-term friction.

This approach avoids the classic mistake of selecting storage only for initial deployment convenience.
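The layered approach above can be sketched as a small checklist function. This is a hypothetical illustration, not a sizing tool: the field names, the `suggest_form_factor` helper, and the threshold of two are all assumptions, and a real decision would weigh these constraints rather than count them.

```python
from dataclasses import dataclass


@dataclass
class NodeRequirements:
    """Hypothetical checklist of operational constraints for one server."""
    sustained_or_mixed_io: bool
    needs_front_access_swap: bool
    limited_remote_hands: bool
    expansion_planned: bool


def suggest_form_factor(req: NodeRequirements, threshold: int = 2) -> str:
    # Count how many operational pressures apply (True counts as 1).
    pressure = sum([
        req.sustained_or_mixed_io,
        req.needs_front_access_swap,
        req.limited_remote_hands,
        req.expansion_planned,
    ])
    # Assumption: "several" pressures is modelled as an arbitrary threshold.
    if pressure >= threshold:
        return "server-oriented NVMe"
    return "M.2 NVMe"


boot_node = NodeRequirements(False, False, True, False)
db_node = NodeRequirements(True, True, True, False)
```

Here `suggest_form_factor(boot_node)` returns `"M.2 NVMe"` and `suggest_form_factor(db_node)` returns `"server-oriented NVMe"`, which matches the idea of starting from constraints instead of form factor preference.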

Common myths to avoid

- Myth: If both are NVMe, they are effectively the same in servers.

Reality: Protocol parity does not erase differences in thermals, access method, and service design.

- Myth: Smaller always means more efficient.

Reality: Smaller can also mean tighter thermal margins and less convenient maintenance.

- Myth: Peak performance tells the whole story.

Reality: Sustained behavior and recoverability matter more in hosting.

- Myth: M.2 is wrong for servers.

Reality: It is viable for the right roles, just not ideal for every production pattern.

Final recommendation

For Hong Kong hosting infrastructure, the better choice between M.2 NVMe and standard NVMe depends on whether you optimize for compact integration or operational resilience. If the server is light-duty, role-specific, or not expected to undergo frequent field service, M.2 NVMe can be efficient and technically clean. If the server handles core applications, mixed IO, sustained activity, or needs easier maintenance, standard NVMe is usually the better engineering decision. The smartest selection is the one that fits airflow, uptime policy, and service workflow, not just the one that looks fastest on paper.