Cloud GPU vs US Dedicated Server

As enterprise computing demands surge—from AI model training and rendering to big data analytics—tech teams face a critical dilemma: choosing between cloud GPU resources and US dedicated servers. This guide cuts through the noise by aligning with US-specific needs like data compliance, bandwidth efficiency, and latency control, helping technical professionals make decisions that balance performance and cost.

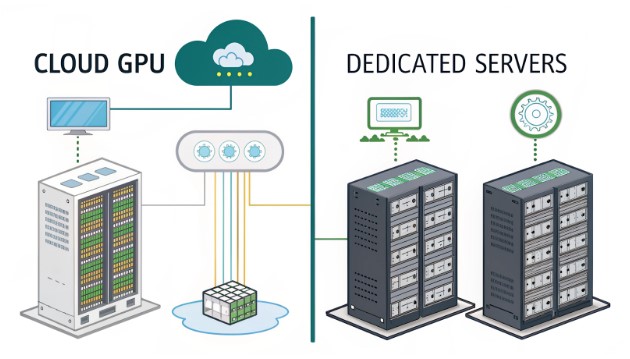

Foundational Understanding: Differentiating Two Computing Workhorses

What is a US Cloud GPU Server?

A US cloud GPU server is a virtualized GPU instance hosted on cloud infrastructure located within the United States, offering pay-as-you-go access to graphics processing power. Its core attributes include:

- Elastic scalability to scale up or down based on real-time demand

- Hands-off hardware maintenance, with infrastructure management handled by the provider

- Rapid deployment capabilities, leveraging US-based nodes for reduced latency in domestic operations

What is a US Dedicated Server (Including GPU-Equipped Models)?

A US dedicated server is a physical machine reserved for a single tenant and deployed in a US-based data center; GPU-equipped models include GPUs that are not shared with other customers. Key characteristics are:

- Dedicated computing power with no resource sharing or virtualization overhead

- Lower, more predictable latency, since workloads run directly on the hardware without a hypervisor layer

- Physical data isolation, which simplifies meeting stringent US regulatory requirements

Key Distinction

The primary divide lies in resource ownership and deployment: cloud GPUs offer virtual, on-demand access, while dedicated servers provide exclusive, hardware-based computing within US data centers.

Core Comparison: Cloud GPU vs US Dedicated Server

| Comparison Dimension | US Cloud GPU Server | US Dedicated Server (with GPU) |
|---|---|---|
| Computing Elasticity | On-demand scaling for short-term peaks; no long-term commitment | Fixed capacity requiring advance configuration planning |
| Cost Structure | Pay-as-you-go (hourly/monthly) pricing; higher costs for prolonged high loads | Upfront hardware investment + colocation fees; more cost-effective for long-term use |
| Latency Performance | Network-dependent, slightly higher than dedicated servers due to virtualization | Minimal latency via direct hardware access; optimal for US domestic users |
| Compliance & Data Security | Multi-tenant environment; requires verification of US compliance standards | Dedicated isolation supporting strict regulations like CCPA and HIPAA |
| Maintenance Complexity | Provider-managed hardware and operations; no in-house IT required | Requires internal or third-party data center maintenance (e.g., hardware troubleshooting) |
| Use Case Alignment | Short-term computing spikes, testing environments, elastic workloads | Stable long-term loads, latency-sensitive applications, high-isolation requirements |

US Server Selection: 5 Critical Decision Factors

Business Scenario Alignment

Match your workload to the platform’s strengths:

- Choose cloud GPU for: AI/ML prototyping, short-duration rendering, US-based businesses with fluctuating traffic (e.g., seasonal sales surges)

- Choose dedicated server for: Long-cycle AI training, latency-critical applications (e.g., US-focused gaming servers), financial transaction systems, healthcare data processing

Cost Budget Calculation

ROI varies based on usage duration:

- Short-term projects (<6 months): Cloud GPU avoids upfront hardware costs, offering better value

- Long-term stable operations (>1 year): Dedicated servers + colocation deliver higher ROI, because under sustained load the amortized hardware cost plus hosting fees fall below cumulative cloud charges
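
The duration rule above comes down to a break-even calculation. The following is a minimal sketch of that arithmetic, not a pricing model: the class names, the hourly rate, the hardware price, and the colocation fee are all hypothetical placeholders, and it ignores factors such as resale value, reserved-instance discounts, and IT labor.

```python
import math
from dataclasses import dataclass


@dataclass
class CloudPlan:
    hourly_rate: float       # USD per GPU-hour (hypothetical figure)
    hours_per_month: float   # GPU-hours actually consumed each month


@dataclass
class DedicatedPlan:
    upfront_hardware: float    # USD, one-time purchase (hypothetical figure)
    monthly_colocation: float  # USD per month for space, power, and bandwidth


def cloud_cost(plan: CloudPlan, months: int) -> float:
    """Cumulative pay-as-you-go spend after the given number of months."""
    return plan.hourly_rate * plan.hours_per_month * months


def dedicated_cost(plan: DedicatedPlan, months: int) -> float:
    """Upfront hardware plus cumulative hosting fees."""
    return plan.upfront_hardware + plan.monthly_colocation * months


def break_even_month(cloud: CloudPlan, dedicated: DedicatedPlan):
    """First whole month at which dedicated spend is no higher than cloud spend.

    Returns None when the cloud plan is never more expensive per month,
    in which case the upfront cost is never recovered.
    """
    monthly_saving = cloud.hourly_rate * cloud.hours_per_month - dedicated.monthly_colocation
    if monthly_saving <= 0:
        return None
    return math.ceil(dedicated.upfront_hardware / monthly_saving)


# Illustrative numbers only: a $2.50/hour GPU used 600 hours a month,
# versus $12,000 of hardware hosted for $500 a month.
cloud = CloudPlan(hourly_rate=2.50, hours_per_month=600)
dedicated = DedicatedPlan(upfront_hardware=12_000, monthly_colocation=500)
for horizon in (6, 12, 36):
    print(horizon, cloud_cost(cloud, horizon), dedicated_cost(dedicated, horizon))
print("break-even month:", break_even_month(cloud, dedicated))
```

With these placeholder inputs the cloud option is cheaper at 6 months and the dedicated option is cheaper at 36. The crossover point moves sharply with utilization, so `hours_per_month` is the input most worth measuring before trusting the result.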

Compliance & Data Sovereignty

US regulatory frameworks demand careful consideration:

- For CCPA/HIPAA compliance: Prioritize dedicated servers (direct data control and dedicated storage)

- For standard business needs: Cloud GPU suffices, as leading providers adhere to US compliance requirements

Performance Requirements

- Latency-sensitive tasks (e.g., US real-time applications): Dedicated servers eliminate virtualization overhead for faster response times

- Fluctuating computing peaks: Cloud GPU enables instant scaling without idle hardware waste

Maintenance Resources

- Limited IT expertise: Cloud GPU offloads infrastructure management to the provider

- Available IT teams or data center partnerships: Dedicated servers allow custom configurations (e.g., GPU upgrades) and granular control

Practical Framework: 3 Steps to US Server Selection

- Clarify Core Requirements

  - Document computing scale (GPU specifications and quantity), operational duration, latency thresholds, and compliance mandates

  - Identify non-negotiables (e.g., data residency on US soil, maximum acceptable downtime)

- Evaluate Cost ROI

  - Compare monthly cloud GPU pricing against dedicated server hardware costs + colocation fees over 1-year and 3-year horizons

  - Factor in indirect costs (e.g., IT labor for maintenance, potential downtime while capacity is added or hardware is replaced)

- Test and Validate

  - Cloud GPU: Use provider free trials or credits, where offered, to test latency, stability, and compatibility with US-based workloads

  - Dedicated server: Request trial access from US data centers to verify real-world performance and connectivity
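
For the test-and-validate step, one simple way to compare candidates is to time TCP handshakes from a client location that resembles your users. The sketch below is one possible approach rather than a standard benchmark: the function names are made up for illustration, and handshake time is only a rough proxy for application latency.

```python
import socket
import statistics
import time


def tcp_connect_ms(host: str, port: int, timeout: float = 3.0) -> float:
    """Time a single TCP handshake to host:port, in milliseconds."""
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        pass  # connection established; close immediately
    return (time.perf_counter() - start) * 1000.0


def summarize(samples_ms: list) -> dict:
    """Median, nearest-rank 95th percentile, and maximum of the samples."""
    ordered = sorted(samples_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return {
        "median": statistics.median(ordered),
        "p95": ordered[rank - 1],
        "max": ordered[-1],
    }


def meets_threshold(samples_ms: list, threshold_ms: float) -> bool:
    """True when the 95th-percentile sample is within the latency budget."""
    return summarize(samples_ms)["p95"] <= threshold_ms
```

A typical run would collect a few dozen samples, for example `[tcp_connect_ms("trial-host.example.com", 443) for _ in range(30)]` with a placeholder hostname, and then call `meets_threshold(samples, 50)` against the latency threshold documented in step one. Judging on the 95th percentile rather than the average keeps occasional slow connections from being hidden.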

US-Based Selection Case Studies

- AI Startup: Needed short-term model testing and prototype development → Adopted US cloud GPU for flexible scaling and zero upfront costs

- Gaming Developer: Required stable long-term hosting for US players with sub-50ms latency → Selected US dedicated GPU servers for consistent, unshared performance

- Research Institution: Mixed workloads (core training + auxiliary computing) → Combined dedicated servers for critical tasks and cloud GPU for supplementary processing

Common Pitfalls to Avoid

- Misconception 1: “Cloud GPU is always cheaper” → Prolonged high loads make dedicated servers more cost-effective due to recurring cloud fees

- Misconception 2: “Dedicated servers guarantee low latency” → Proximity to US users is key—choose data centers near your target audience

- Misconception 3: Ignoring US bandwidth costs → Cloud GPU cross-region data transfer fees add up; dedicated servers offer customizable bandwidth packages
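
Misconception 2 can be made concrete with a back-of-the-envelope propagation estimate. The sketch below assumes signals travel through fiber at roughly 200,000 km/s (about two-thirds of the speed of light in vacuum) along the great-circle path. Real routes are longer and add switching and queuing delay, so the result is a lower bound, not a prediction. The function names and the city coordinates are illustrative.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_SPEED_KM_PER_S = 200_000.0  # approximation: about 2/3 of c


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000.0


# New York City to Los Angeles, approximate city-center coordinates.
distance = great_circle_km(40.7128, -74.0060, 34.0522, -118.2437)
print(f"{distance:.0f} km, at least {min_rtt_ms(distance):.1f} ms round trip")
```

A coast-to-coast path of roughly 3,900 km already costs close to 40 ms of round-trip time before any server work begins. That is why a dedicated server on the wrong coast can miss a tight latency budget that a nearby cloud region would meet.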

Conclusion & Actionable Recommendations

There’s no one-size-fits-all solution—successful US server selection hinges on aligning workload characteristics, cost constraints, and compliance needs. For technical teams:

- Opt for US cloud GPU if: You need short-term elasticity, have limited IT resources, or operate fluctuating workloads

- Opt for US dedicated server if: You require long-term stability, ultra-low latency, strict data isolation, or compliance with US regulations like HIPAA
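
The two recommendations above can be expressed as a rough tally of signals. This is an illustrative sketch only: the `Workload` fields and the `recommend` function are hypothetical, the 6-month and 12-month cutoffs are the ones used earlier in this guide, and a real decision should still go through the cost and latency checks.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    duration_months: int      # expected operational duration
    fluctuating_demand: bool  # load varies significantly over time
    latency_critical: bool    # e.g., real-time applications
    strict_isolation: bool    # e.g., HIPAA-regulated data
    has_ops_team: bool        # in-house or partner staff for hardware upkeep


def recommend(w: Workload) -> str:
    """Tally the signals from this guide and name the option they point to."""
    dedicated_signals = sum([
        w.duration_months > 12,
        w.latency_critical,
        w.strict_isolation,
    ])
    cloud_signals = sum([
        w.duration_months < 6,
        w.fluctuating_demand,
        not w.has_ops_team,
    ])
    if dedicated_signals and cloud_signals:
        return "hybrid"      # mixed signals: split core and auxiliary workloads
    if dedicated_signals:
        return "dedicated"
    if cloud_signals:
        return "cloud_gpu"
    return "undecided"       # no strong signal: rely on the cost comparison


# A short prototype project with variable demand and no ops staff.
print(recommend(Workload(3, True, False, False, False)))
```

Mixed signals map to "hybrid" here because that mirrors the research institution case study. Teams may prefer to weight some factors, such as compliance, more heavily than a plain tally does.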

By following the framework outlined, tech professionals can avoid overprovisioning, reduce unnecessary costs, and ensure their computing infrastructure supports US-based operations effectively. Whether the answer is cloud GPU flexibility, dedicated server reliability, or a mix of both, the right choice serves both immediate needs and long-term technical goals.